Taskstream: Faculty-Friendly Scoring Experience

Taskstream and AAC&U partnered up to allow hundreds of faculty and administrators to participate in a Multi-State Learning Outcome Assessment. This was a great opportunity for Taskstream to create a simpler and more faculty-friendly scoring experience.

A 2.5-month User Centered Design consultancy, including UX strategy, exploratory user research, and a design proposal to simplify the scoring experience in educational assessment software.

As a UCD consultant, I conducted user research to help Taskstream’s Product Team define their MVP. I also provided design principles and an interaction framework, and created and tested low- and medium-fidelity interactive prototypes.

Heuristic Evaluation, Contextual Inquiry, Affinity Diagrams, Competitive Analysis, Artifact Analysis, Personas, White-boarding, Prototyping, User Testing.

Intro

While working toward a product that provides a simpler path to more meaningful assessment, Taskstream partnered with the Multi-State Collaborative (MSC) Project and tasked its product team with creating a new scoring experience that supported the needs of the project.

The goal was to provide a great experience for scorers from 69 colleges and universities across the country, each of whom was expected to score approximately 75 student assignments in a system they had never used before.

In the words of Jeff Reid (VP of Product): “Our research and our own experience with customers were telling us that to make direct assessment scalable and sustainable, the system had to be remarkably simple for users each time they touched it.”

As I got involved in the project as a User Centered Design (UCD) consultant, my first task was to learn as much as possible about Taskstream’s domain (assessment) and the users we were aiming to serve.

Research & Synthesis

Taskstream had gained a lot of experience in its 16 years as a market leader, so we wanted to make sure we leveraged all that domain knowledge and also learned from what had not worked in the past. I started by interviewing customer service associates and sales representatives, listening to implementation calls, and conducting a heuristic evaluation of the current scoring experience.

How easy can we make this?

Taskstream had done a great job supporting its diverse customer base by building a very powerful and flexible system. But in accommodating an ever-growing number of workflows, the system had developed a bad case of feature creep and a heavy dependency on customer service over the years. It was time for a refresh!

During this first exploration it became clear that the process of assessment was inherently complex, and that each university and institution had very specific ways of approaching it, making the number of possible workflows to support virtually endless.

As the product team and I started working together, we asked ourselves: “How easy can we make this?”

We realized that, in order to ship a truly valuable experience in the next 3 months, we would need to define our scope smartly: identify the common challenges among this diverse customer base, follow the 80/20 rule, and focus on the challenges remote evaluators face when scoring student work. This new approach meant challenging many common assumptions within the company, so having strong user data to back up our decisions became all the more essential.

We conducted contextual inquiries with a varied set of “power” evaluators who spend the better part of their days scoring student work. We sought first to understand how they read student work and how they read and apply the rubrics, but we also learned about a host of other tips, tricks and tools they use to manage productivity.

The legacy scoring system had become increasingly complex

Insight

We synthesized the information gathered during our interviews into personas and main insights that would serve as guiding principles for our designs.

Some of our main takeaways:

* The scoring process is a fluid back and forth between the student work and the rubric. However, the scorer’s main point of focus should always be the student work.

* Power users quickly become familiar with the rubric. From that point on they refer to it only to enter scores, not to review its specifics.

* Every school has a well-defined scoring policy. The system should include such policy rules as defaults in order to reduce scorers’ cognitive load and avoid unnecessary decision points.

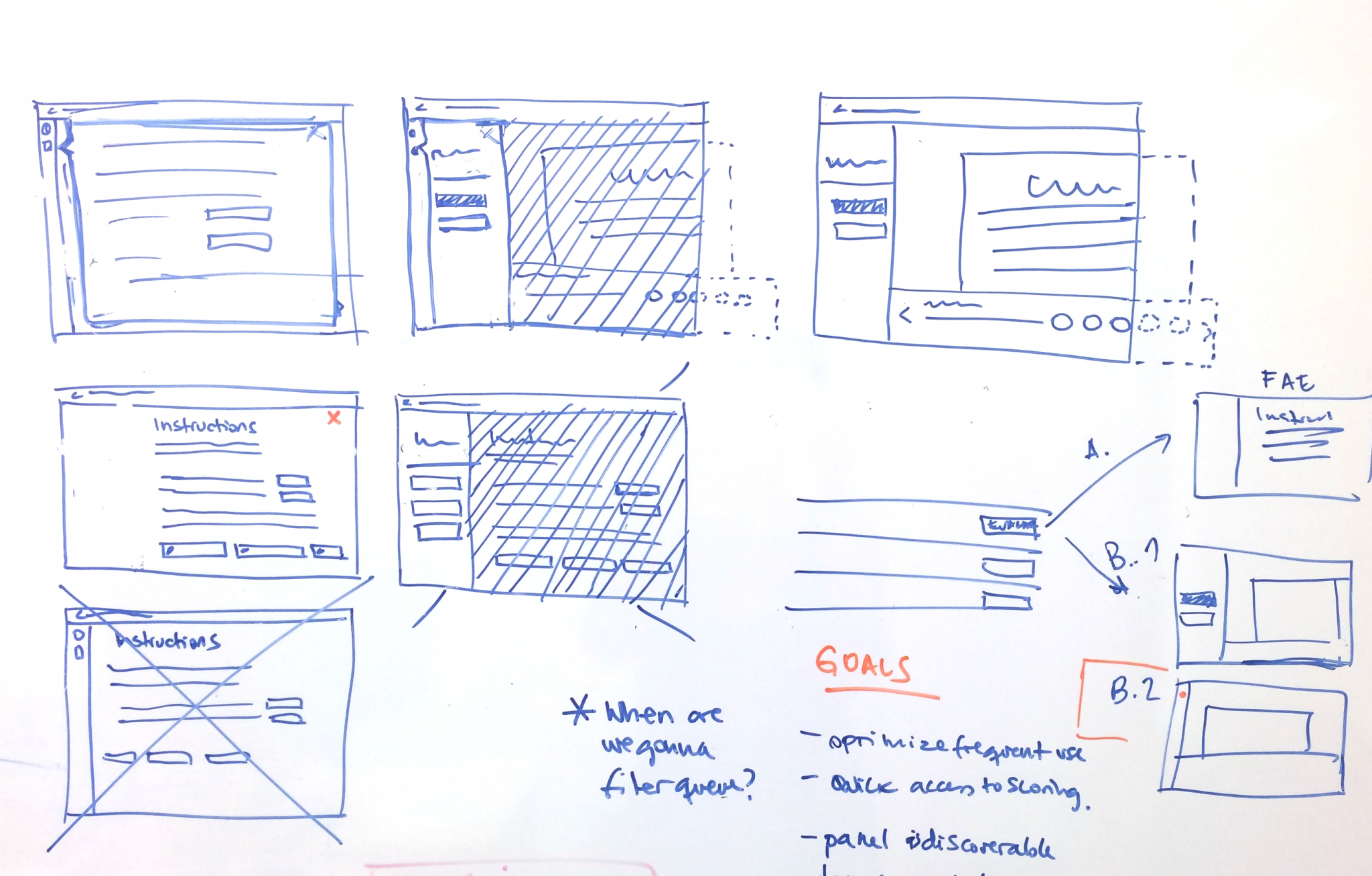

These insights made their way into paper prototypes, which then evolved into electronic versions that we quickly tested with some users.

This first round of testing largely validated our general approach. Most importantly, it helped identify some potentially confusing interactions that we then reworked to create a more reassuring experience for evaluators about to submit final scores.

Personae flashcards

The solution included a layout that put student work front and center, while giving evaluators easy access to a scoring tool that expands to present rubric details on demand.

This allowed:

- Evaluators to quickly go back and forth between the student work and the rubric.

- Power users who are familiar with the rubric to hide unnecessary details.

To account for novice users, the solution also included a first-time user experience that presented evaluators with an expanded version of the rubric.

Before and after

Results

So, how easy did we make it?

Later in February, the product team at Taskstream was invited to join an evaluator training session that AAC&U hosted for Multi-State Collaborative participants and to give a 30-minute presentation and Q&A session for evaluators about our new system. The participating evaluators then went home to their campuses and began scoring a few weeks later.

At the end of the scoring period we sent out a survey to all the evaluators, and the feedback was remarkably positive. When asked to rate key tasks on a 5-point scale (very hard to very easy), the overwhelming majority (over 90%) indicated that reading, scoring and interacting with the rubrics were “easy” or “very easy.” But it was the comments we received that told us we were on the right path to achieve our goal.

“I was impressed by the design of this process. Functions were intuitive, everything was easy to find and use. The interface made the most of screen real estate for easy reading and referencing of criteria.”

“It’s way more convenient and a lot less stressful (stacks of paper on my desk = stress). It allowed me to stay organized and keep track of my progress easily. It also saved a lot of trees! I really liked it!”

Most important of all, AAC&U and SHEEO considered the pilot a success and were able to expand participation across states for the upcoming “Demonstration Year.”

Janine Fusco presenting an overview of the scoring experience during an AAC&U training event.

Aqua emerges

A new product emerges: Aqua

The feedback received and the mounting interest from institutions both inside and outside of the MSC helped sharpen Taskstream’s vision for a product that would allow institutions to make the daunting work of assessing student learning outcomes more achievable.

The scoring functionality was only one phase of an assessment project lifecycle. To make this useful for a campus to apply to local efforts, Taskstream needed to support more of the overall workflow. They wanted to give those accountable for assessment efforts (such as assessment coordinators and directors) a similarly simple set of tools they could use to easily manage and track their initiatives. So after a few months I came back to Taskstream as their full-time UX Lead to take on the challenge, and we started our next big project: Aqua.

* Disclaimer: parts of this text were taken from a great blog post written by Taskstream’s VP of Product Jeff Reid, where he talks about the experience of creating Aqua. Check out the full post here.